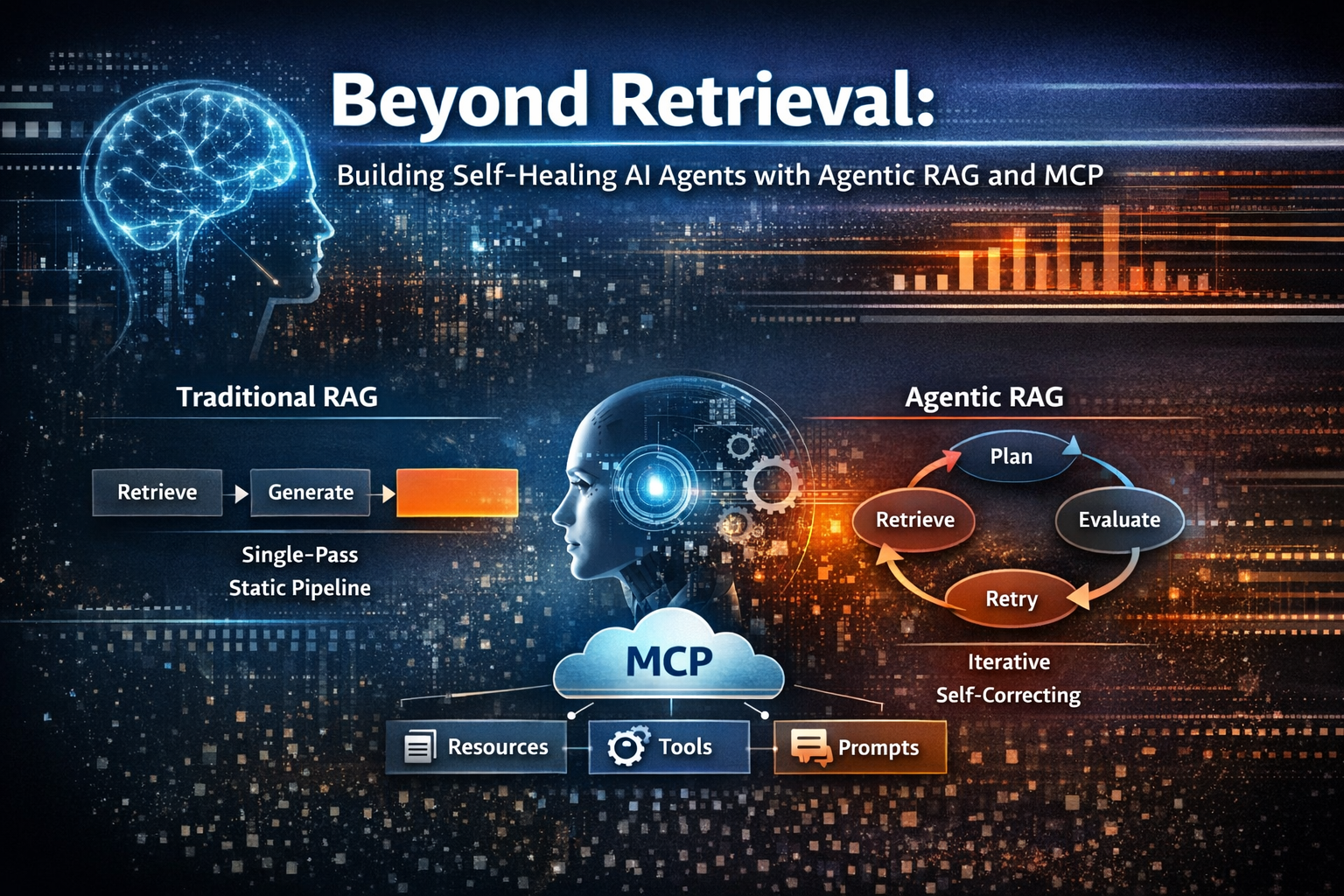

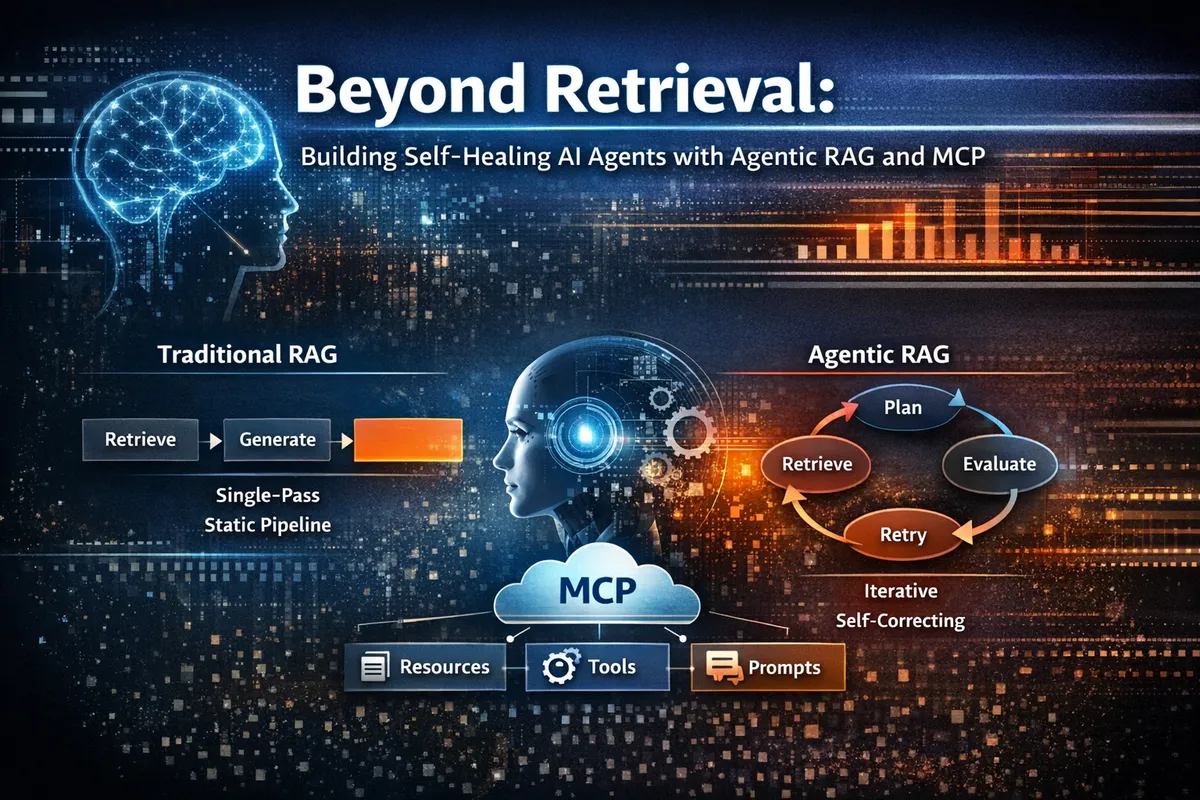

Beyond Retrieval: Building Self-Healing AI Agents with Agentic RAG and MCP

Introduction: From Static RAG to Living Systems

Retrieval-Augmented Generation (RAG) marked an important milestone in grounding large language models in external knowledge. By coupling generation with retrieval, it reduced hallucinations and improved factual accuracy. However, as AI systems moved from demos into production, the architectural limits of traditional RAG became impossible to ignore.

Classic RAG pipelines are fundamentally stateless, single-pass, and deterministic. A query is embedded, a vector search retrieves top-k chunks, those chunks are appended to a prompt, and the model generates an answer. This approach works for simple fact lookups but breaks under real-world complexity: dynamic systems, multi-step reasoning, long-running tasks, and unreliable dependencies.
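A minimal sketch of that single pass is shown below. It uses toy stand-ins: the bag-of-words `embed` function replaces a real embedding model, an in-memory list replaces the vector store, and the returned prompt would normally be sent to an LLM. All names are hypothetical. The point is the shape: one retrieval, a fixed `top_k`, and nothing flowing back.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    # Toy "embedding": lowercase word counts (stand-in for a real embedding model).
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def classic_rag_prompt(query: str, corpus: list[str], top_k: int = 2) -> str:
    q = embed(query)
    # Exactly one retrieval with a fixed top_k; nothing checks whether it was enough.
    ranked = sorted(corpus, key=lambda doc: cosine(q, embed(doc)), reverse=True)
    context = "\n".join(ranked[:top_k])
    # The prompt is sent to the model once; no signal feeds back into retrieval.
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


corpus = [
    "oauth tokens expire after one hour",
    "the dashboard shows p99 latency",
    "logs stream continuously from the gateway",
]
print(classic_rag_prompt("when do oauth tokens expire", corpus, top_k=1))
```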

In 2026, the challenge is no longer “How do we retrieve documents?” but “How do we build AI systems that can adapt, recover, and operate reliably?”

The answer lies in Agentic RAG combined with the Model Context Protocol (MCP).

Why Traditional RAG Fails in Production

Traditional RAG systems fail not because of weak models, but because of rigid architecture.

Single-Pass Retrieval

Classic RAG performs exactly one retrieval per query, with no mechanism to assess whether the retrieved documents are sufficient. If retrieval fails (due to vocabulary mismatch, poor chunking, or embedding drift), the system proceeds anyway and produces confident but incorrect answers.

Fixed Retrieval Parameters

The same top-k, similarity thresholds, and embedding models are applied to every query. Simple questions are over-retrieved; complex investigative queries are under-retrieved.
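One way to avoid a single fixed configuration is to choose retrieval parameters per query. The sketch below uses a deliberately crude, hypothetical heuristic (query length plus a few investigative cue words). The thresholds and the `RetrievalParams` type are assumptions; an agent could just as well ask the model to classify the query.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RetrievalParams:
    top_k: int        # how many chunks to retrieve
    min_score: float  # similarity cutoff below which chunks are dropped


# Hypothetical cue words suggesting an investigative, multi-hop question.
INVESTIGATIVE = {"why", "compare", "investigate", "root", "cause", "difference"}


def choose_params(query: str) -> RetrievalParams:
    words = query.lower().strip("?").split()
    if len(words) > 12 or INVESTIGATIVE & set(words):
        # Complex questions: cast a wider net with a looser cutoff.
        return RetrievalParams(top_k=20, min_score=0.2)
    # Simple lookups: a few high-confidence chunks are enough.
    return RetrievalParams(top_k=3, min_score=0.5)


print(choose_params("What is the default port?"))
print(choose_params("Why did p99 latency spike after the deploy?"))
```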

Static Knowledge Assumptions

Traditional RAG assumes knowledge bases are relatively static. In reality, APIs evolve, dashboards change, logs stream continuously, and documentation lags behind implementations.

No Feedback Loops

There is no evaluation of answer quality feeding back into retrieval. The system cannot detect hallucinations, contradictions, or missing context.

The result is brittle automation that fails silently—an unacceptable property for production systems.

The Agentic Shift: Retrieval as an Active Process

Agentic RAG reframes retrieval as goal-directed behavior rather than a preprocessing step.

An agent:

- Interprets intent

- Plans information gathering

- Executes retrievals and tool calls

- Evaluates sufficiency and quality

- Adapts strategy when needed

This mirrors how experienced engineers investigate problems—iteratively, skeptically, and adaptively.
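The execute, evaluate, and adapt steps can be sketched as a bounded loop. Two assumptions keep it small: sufficiency is approximated by term coverage (the fraction of query terms found in the retrieved text), and the alternative phrasings in `rewrites` are supplied up front, where a real agent would typically have the model generate them. `agentic_retrieve` and `coverage` are hypothetical names.

```python
from typing import Callable

Retriever = Callable[[str], list[str]]


def coverage(query: str, docs: list[str]) -> float:
    # Fraction of query terms that appear anywhere in the retrieved text.
    terms = set(query.lower().split())
    found = set(" ".join(docs).lower().split())
    return len(terms & found) / len(terms) if terms else 1.0


def agentic_retrieve(query: str, retrieve: Retriever, rewrites: list[str],
                     threshold: float = 0.6) -> tuple[list[str], list[str]]:
    """Try the query, then each rewrite, until retrieval looks sufficient.

    Returns (docs, queries_tried). Empty docs means every strategy failed.
    """
    tried: list[str] = []
    for q in [query, *rewrites]:
        tried.append(q)
        docs = retrieve(q)                   # execute
        if coverage(q, docs) >= threshold:   # evaluate sufficiency
            return docs, tried
        # otherwise adapt: fall through to the next phrasing
    return [], tried                         # out of strategies: caller should escalate


# Usage: the first phrasing misses (vocabulary mismatch), the rewrite succeeds.
index = {"token lifetime": ["access token lifetime is 3600 seconds"]}
docs, tried = agentic_retrieve("how long do creds last", lambda q: index.get(q, []), ["token lifetime"])
print(tried)
```

Returning an empty result together with the queries tried, rather than guessing, gives the caller what it needs to escalate.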

Mental Model: Agentic RAG as a Control Loop

The most useful mental model for Agentic RAG is a self-healing control system, not a pipeline.

- Planner decides what to do next

- Executor performs retrievals or actions

- Observation layer evaluates outcomes

- State store persists context and artifacts

- Feedback loops re-enter planning

Traditional RAG is open-loop. Agentic RAG is closed-loop by design.
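A skeleton of that control loop follows, with each component passed in as a plain function so it can be swapped out. The in-memory `StateStore` stands in for durable storage, and the function names are illustrative rather than any framework's API.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class StateStore:
    # Persists context and artifacts across iterations (in memory for this sketch).
    history: list = field(default_factory=list)


Planner = Callable[[str, StateStore], Optional[str]]


def control_loop(goal: str, plan: Planner,
                 execute: Callable[[str], str],
                 observe: Callable[[str, str], bool],
                 max_steps: int = 5) -> StateStore:
    state = StateStore()
    for _ in range(max_steps):            # hard bound so the loop always terminates
        action = plan(goal, state)        # planner decides what to do next
        if action is None:                # planner judges the goal satisfied
            break
        result = execute(action)          # executor performs a retrieval or action
        ok = observe(action, result)      # observation layer evaluates the outcome
        state.history.append({"action": action, "result": result, "ok": ok})
        # feedback: the next plan() call sees the updated state
    return state


# Usage: a planner that widens the search after a failed attempt.
def plan(goal: str, state: StateStore) -> Optional[str]:
    if not state.history:
        return "search"
    return None if state.history[-1]["ok"] else "search-wider"


final = control_loop("find OAuth docs", plan,
                     execute=lambda a: "2 hits" if a == "search-wider" else "0 hits",
                     observe=lambda a, r: not r.startswith("0"))
print([step["action"] for step in final.history])
```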

What “Self-Healing” Actually Means

Self-healing is not a buzzword—it is an engineering property.

A self-healing agent can:

- Detect failure via telemetry

- Diagnose failure type

- Recover using bounded strategies

- Escalate when autonomy ends

Key signals include retrieval coverage, relevance scores, hallucination likelihood, latency anomalies, and cost spikes. Recovery strategies are chosen based on failure type, not blind retries.
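The detect, diagnose, recover, and escalate sequence can be sketched as a lookup from failure type to a recovery strategy with its own retry budget. The signal names, thresholds, strategy labels, and budgets below are hypothetical placeholders; in practice they would come from your own telemetry baselines.

```python
from enum import Enum
from typing import Optional


class Failure(Enum):
    COST_SPIKE = "cost_spike"
    LATENCY = "latency"
    LOW_COVERAGE = "low_coverage"
    LOW_RELEVANCE = "low_relevance"


def diagnose(signals: dict) -> Optional[Failure]:
    # Hypothetical thresholds, checked in priority order.
    if signals.get("cost_usd", 0.0) > 0.50:
        return Failure.COST_SPIKE
    if signals.get("latency_ms", 0) > 5000:
        return Failure.LATENCY
    if signals.get("coverage", 1.0) < 0.5:
        return Failure.LOW_COVERAGE
    if signals.get("relevance", 1.0) < 0.3:
        return Failure.LOW_RELEVANCE
    return None


# Failure type -> (recovery strategy, max attempts). Budget 0 means "never retry".
RECOVERY = {
    Failure.COST_SPIKE: ("escalate", 0),
    Failure.LATENCY: ("use_cached_index", 1),
    Failure.LOW_COVERAGE: ("rewrite_query", 2),
    Failure.LOW_RELEVANCE: ("rerank_results", 1),
}


def next_step(signals: dict, attempts: dict) -> str:
    failure = diagnose(signals)
    if failure is None:
        return "done"
    strategy, budget = RECOVERY[failure]
    if attempts.get(failure, 0) >= budget:
        return "escalate"  # autonomy ends here: hand off to a human
    attempts[failure] = attempts.get(failure, 0) + 1
    return strategy


attempts: dict = {}
print([next_step({"coverage": 0.2}, attempts) for _ in range(3)])
```

Because each failure type carries its own budget, a persistent coverage problem gets a bounded number of query rewrites before escalation, while a cost spike escalates immediately.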

Feedback Loops at Multiple Timescales

Robust agentic systems implement layered feedback loops:

- Inner loop (seconds): relevance grading → re-retrieval

- Intermediate loop (minutes): answer validation → strategy tuning

- Outer loop (days): telemetry → architectural change

This hierarchy prevents thrashing while enabling continuous improvement.
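The intermediate loop is where thrashing tends to start, so here is a sketch of it in isolation: a tuner that adjusts `top_k` from a rolling window of answer-validation results. The window size, cooldown, failure-rate thresholds, and the double-on-failure, decrement-on-success policy are all assumptions. The window and the cooldown are what stop the loop from reacting to every single outcome.

```python
from collections import deque


class TopKTuner:
    """Intermediate loop: tune top_k from recent answer-validation outcomes."""

    def __init__(self, top_k: int = 5, window: int = 20, cooldown: int = 10,
                 lo: int = 2, hi: int = 20):
        self.top_k, self.lo, self.hi = top_k, lo, hi
        self.outcomes = deque(maxlen=window)  # rolling window of pass/fail results
        self.cooldown = cooldown              # min outcomes between adjustments
        self.since_change = cooldown          # first adjustment allowed once window fills

    def record(self, answer_valid: bool) -> int:
        self.outcomes.append(answer_valid)
        self.since_change += 1
        window_full = len(self.outcomes) == self.outcomes.maxlen
        if window_full and self.since_change >= self.cooldown:
            fail_rate = self.outcomes.count(False) / len(self.outcomes)
            if fail_rate > 0.3 and self.top_k < self.hi:
                self.top_k = min(self.hi, self.top_k * 2)  # widen retrieval
                self.since_change = 0
            elif fail_rate < 0.05 and self.top_k > self.lo:
                self.top_k = max(self.lo, self.top_k - 1)  # narrow slowly
                self.since_change = 0
        return self.top_k


tuner = TopKTuner(top_k=5, window=4, cooldown=4)
print([tuner.record(False) for _ in range(8)])  # [5, 5, 5, 10, 10, 10, 10, 20]
```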

MCP: The Standardized Backbone

Agentic behavior collapses without reliable access to tools and state.

The Model Context Protocol (MCP) standardizes how AI systems access:

- Resources (read-only data)

- Tools (executable actions, which may change state)

- Prompts (reusable templates)

MCP decouples reasoning from execution. Agents no longer hard-code integrations; they discover and use capabilities dynamically through a stable contract.
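Under the hood, MCP messages are JSON-RPC 2.0, and discovering and using resources, tools, and prompts maps onto methods such as `tools/list`, `tools/call`, `resources/read`, and `prompts/get`. The sketch below only builds request payloads with the standard library. It omits the `initialize` handshake that must come first and the transport itself, and the resource URI, tool name, prompt name, and arguments are hypothetical.

```python
import itertools
import json
from typing import Optional

_ids = itertools.count(1)


def request(method: str, params: Optional[dict] = None) -> dict:
    # Every MCP request is a JSON-RPC 2.0 message with a unique id.
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


# Discovery: ask the server what it offers instead of hard-coding integrations.
discover = [request("tools/list"), request("resources/list"), request("prompts/list")]

# Use: read a resource, call a tool, fetch a prompt template (hypothetical names).
read_runbook = request("resources/read", {"uri": "file:///runbooks/oauth.md"})
call_tool = request("tools/call", {"name": "search_logs",
                                   "arguments": {"query": "invalid_grant", "since": "1h"}})
get_prompt = request("prompts/get", {"name": "incident_summary",
                                     "arguments": {"service": "auth"}})

for msg in [*discover, read_runbook, call_tool, get_prompt]:
    print(json.dumps(msg))
```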

Session Persistence: The Reliability Multiplier

In long-running agents, many failures are really state failures.

Sessions expire. Processes restart. Long-running tasks break.

Persistent sessions, layered on top of MCP, support:

- Multi-turn reasoning

- Long-running workflows

- Recovery after crashes

- Learning from history

This mirrors reliable browser session design: checkpointing, retries, and graceful degradation.
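Checkpointing is the simplest of these to illustrate. This sketch runs named workflow steps in order, saves the accumulated results to a JSON file after each one, and skips completed steps on restart. `run_with_checkpoints` and the single-file layout are assumptions, and step results must be JSON-serializable.

```python
import json
import os
import tempfile
from typing import Callable

Step = tuple[str, Callable[[dict], object]]  # (step name, function of current state)


def run_with_checkpoints(steps: list[Step], path: str) -> dict:
    """Run named steps in order, checkpointing after each so a restart resumes mid-workflow."""
    state: dict = {}
    if os.path.exists(path):
        with open(path) as f:
            state = json.load(f)        # recover results from a previous run
    for name, fn in steps:
        if name in state:
            continue                    # completed before the crash: skip
        state[name] = fn(state)         # result must be JSON-serializable
        fd, tmp = tempfile.mkstemp(dir=os.path.dirname(path) or ".")
        with os.fdopen(fd, "w") as f:
            json.dump(state, f)
        os.replace(tmp, path)           # swap in the new checkpoint in one step
    return state
```

Writing to a temporary file in the same directory and then calling `os.replace` means that, on POSIX systems, a crash mid-write leaves the previous checkpoint intact rather than a half-written one.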

Planner–Executor Architecture in Practice

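Agentic RAG systems separate thinking from doing. The following example shows the execution side: browser actions run inside a persistent, named tool session, and the findings are stored for later steps. The `mcp_client` module, `MCPClient`, `ToolSession`, and the `Browser` page methods are illustrative placeholders, not a specific library's documented API.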

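```python
import asyncio

# Illustrative imports: `mcp_client`, `MCPClient`, and `ToolSession` stand in for
# whatever MCP client wrapper you use; they are not the official MCP SDK API.
from mcp_client import MCPClient, ToolSession

# `Browser` and the page methods used below are likewise illustrative placeholders.
from browser_use import Browser


async def research_agent(topic: str) -> str:
    mcp = MCPClient()

    # A named, persistent session lets the workflow resume after a crash or restart.
    async with ToolSession(name="research-session", persist=True) as session:
        browser = Browser(session=session)

        # Executor steps: search, open the top result, extract its text.
        page = await browser.search(topic)
        await page.open_first_result()
        findings = await page.extract_text()

        # Store findings with metadata so later turns (or a restarted agent) can reuse them.
        await mcp.store(
            key="research_notes",
            value=findings,
            metadata={"topic": topic},
        )

        return findings


if __name__ == "__main__":
    print(asyncio.run(research_agent("OAuth 2.1 backward compatibility")))
```

The session, not the model, is what makes this reliable.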
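The research example keeps planning implicit. To make the split between thinking and doing explicit, a planner can emit its plan as data, and a separate executor can validate it and run it against a registry of allowed tools. This is a sketch under assumptions: the tool names and stub tools are hypothetical, and the fixed plan stands in for one an LLM would generate.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Step:
    tool: str
    args: dict


Tool = Callable[[dict, str], str]  # (arguments, previous step's output) -> output


def plan(topic: str) -> list[Step]:
    # Thinking: a real planner would ask an LLM; this fixed plan is a placeholder.
    return [Step("search", {"query": topic}), Step("summarize", {"max_words": 4})]


def execute(steps: list[Step], tools: dict[str, Tool]) -> list[str]:
    # Doing: validate the whole plan first, so an unknown tool fails before any side effects.
    unknown = [s.tool for s in steps if s.tool not in tools]
    if unknown:
        raise ValueError(f"plan uses unregistered tools: {unknown}")
    outputs: list[str] = []
    previous = ""
    for s in steps:
        previous = tools[s.tool](s.args, previous)  # each step sees the prior output
        outputs.append(previous)
    return outputs


# Usage with hypothetical stand-in tools.
tools = {
    "search": lambda args, _: f"results for {args['query']} from the docs index",
    "summarize": lambda args, prev: " ".join(prev.split()[: args["max_words"]]),
}
print(execute(plan("OAuth 2.1"), tools)[-1])
```

Keeping the plan as plain data also makes it easy to log, review, or reject before anything runs.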

High-Value Use Cases

Automated Auditing & Compliance

Agents log into dashboards, extract reports, detect schema drift, and retry when layouts change.
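Schema-drift detection can be as simple as comparing the columns an agent extracted against the columns it expected, then trying an alternative extraction strategy when required columns go missing. `schema_drift`, `extract_report`, the column names, and the renamed-column scenario are hypothetical.

```python
from typing import Callable


def schema_drift(expected: list[str], observed: list[str]) -> dict:
    # Compare extracted report columns with the schema the audit expects.
    exp, obs = set(expected), set(observed)
    return {
        "missing": sorted(exp - obs),
        "unexpected": sorted(obs - exp),
        "reordered": exp == obs and expected != observed,
    }


def extract_report(extractors: list[Callable[[], list[str]]],
                   expected: list[str]) -> tuple[list[str], int]:
    """Try extraction strategies in order until no expected column is missing."""
    missing = sorted(expected)
    for attempt, extract in enumerate(extractors, start=1):
        columns = extract()
        missing = schema_drift(expected, columns)["missing"]
        if not missing:
            return columns, attempt
    raise RuntimeError(f"schema drift not resolved; still missing {missing}")


# Usage: the dashboard layout changed and "user" now appears as "actor".
expected = ["date", "user", "action"]
primary = lambda: ["date", "actor", "action"]   # layout changed
fallback = lambda: ["date", "user", "action"]   # e.g. an alternative selector
print(schema_drift(expected, primary()))
# {'missing': ['user'], 'unexpected': ['actor'], 'reordered': False}
print(extract_report([primary, fallback], expected))
# (['date', 'user', 'action'], 2)
```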

Incident Response & Log Analysis

Hybrid retrieval with self-correction can substantially reduce mean time to resolution (MTTR).
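The article does not say how hybrid results are combined. One common option is reciprocal rank fusion (RRF), which merges ranked lists from, say, a keyword index and a vector index by summing `1 / (k + rank)` for each document. Keyword search tends to catch exact error codes and identifiers, while vector search catches paraphrases. The log IDs below are made up, and the self-correction step is not shown.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    # Each inner list is one retriever's ranking, best first; k=60 is a commonly used constant.
    scores: dict = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Documents ranked well by several retrievers rise to the top.
    return sorted(scores, key=scores.get, reverse=True)


keyword = ["log-17", "log-03", "log-42"]  # e.g. exact match on an error code
vector = ["log-03", "log-99", "log-17"]   # e.g. semantic match on the symptom
print(reciprocal_rank_fusion([keyword, vector]))  # ['log-03', 'log-17', 'log-99', 'log-42']
```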

Engineering Research Assistants

Multi-source synthesis with confidence scoring replaces manual document hunting.

These systems don’t just answer questions—they operate.

From Prototype to Production

Self-healing agents mature through distinct stages:

- Prototype

- Robustness engineering

- Scale and cost optimization

- Observability and operations

- Continuous improvement

Skipping robustness engineering is the most common failure mode.

The Verdict: Toward Intent-Based Computing

RAG answered: What do we know?

Agentic RAG answers: What should we do next—and what if it fails?

With MCP and self-healing control loops, we move toward intent-based computing—systems that understand goals, manage state, and recover autonomously.

This is not about better prompts.

It’s about engineering for failure.